Technical demo: Bibliotheca

A downloadable game for Windows and Linux

Bibliotheca is exclusively compatible with Love2D 12.0, and will not run on prior versions. Download official Love2D 12.0 builds from the Love2D GitHub:

You need to be logged in to a GitHub account to use either of these links. The Love2D project does not provide alternative download links for 12.0.

Unmodified copies of these downloads are also uploaded on the project itch.io page for people who do not wish to log in to GitHub to download Love2D.

System requirements

Operating Systems

- Windows (x86-64)

- Linux (x86-64)

macOS is not supported due to missing OpenGL 4.3 support. All other Operating Systems and CPU architectures not listed above are not supported.

Graphics unit

OpenGL 4.3 support is mandatory

AI use prohibited

AI was not used at any point for any aspect of the development of Bibliotheca.

Images, music, art, assets

All game assets (including all voxel cube face textures) were made by the below credited contributors, and were made during the jam period, with two exceptions:

- Font: terminus-font

- Font: Uncial Antiqua

Team/Credits

| fnicon | game programmer |

| purist | 3D engine programmer, 3D rigger/animator/unwrapper |

| Shiroiii | game director, legendary composer, sound engineer, texture artist |

| Lynod | lore/world builder, screenplay, storyboarder, voice acting coordinator |

| RedSG | 3D character modeler/animator |

| Pixel Jamp | art |

| (uncredited by request) | character concept/reference artist, 3D character modeler |

Note: Some of the credited work does not appear in the game demo release, but is scheduled for the next/full Bibliotheca release.

Technical Commentary

Note that all code is written for the undocumented Love2D version 12.0 APIs. Generally, as often as practical, all code is explicitly incompatible with 11.5 and lower.

Custom pixel and vertex shaders

| commit | love-demo @ 0a423f85 |

The general strategy is to replace Love2D's default (and highly restrictive) TransformProjectionMatrix with an arbitrary transformation matrix, to allow arbitrary three-dimensional rotations and perspective projection.

Three-dimensional vertex attributes are specified via the Love2D 12.0 love.graphics.newMesh API, using an overload not implemented in 11.5 (nor documented in the Love2D wiki).

0a423f85

produces the following (admittedly superficially unexciting) image:

3D meshes and 3D rotation matrices

| commit | love-demo @ 0a423f85..955a3e09 |

At the end of this commit sequence, I generated binary vertex attribute data containing position, normal, and texture coordinates in the position_normal_texture.vtx file. This is deliberately in precisely the form that is convenient for direct upload to graphics unit video RAM.

The (Love2D 12.0) vertex attribute declaration is also updated to account for the additional vertex attributes, and I hastily wrote functions to produce projection, view and rotation matrices.
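As a rough illustration of the kind of matrix helpers described above, here is a minimal Python sketch of an OpenGL-style perspective projection matrix and a rotation about the Y axis. The function names, the row-major nested-list layout, and the right-handed/negative-Z-forward convention are assumptions made for this example; they are not taken from the love-demo source, which is written in Lua.

```python
import math

def perspective(fov_y, aspect, near, far):
    """OpenGL-style perspective projection (row-major, camera looks down -Z)."""
    f = 1.0 / math.tan(fov_y / 2.0)
    return [
        [f / aspect, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, (far + near) / (near - far), 2.0 * far * near / (near - far)],
        [0.0, 0.0, -1.0, 0.0],
    ]

def rotation_y(angle):
    """Rotation by `angle` radians about the Y axis."""
    c, s = math.cos(angle), math.sin(angle)
    return [
        [c, 0.0, s, 0.0],
        [0.0, 1.0, 0.0, 0.0],
        [-s, 0.0, c, 0.0],
        [0.0, 0.0, 0.0, 1.0],
    ]

def mat_mul(a, b):
    """4x4 matrix product a * b."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def transform(m, v):
    """Multiply a 4x4 matrix by a 4-component column vector."""
    return [sum(m[i][k] * v[k] for k in range(4)) for i in range(4)]
```

A combined transform would be built as `mat_mul(projection, mat_mul(view, rotation))` and applied to homogeneous positions `[x, y, z, 1.0]`; dividing the result by its `w` component gives normalized device coordinates.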

955a3e09

produces the following image:

Matrix/vector math

| commit | love-demo @ 955a3e09..64d959ed |

Love2D doesn't include any math libraries that can operate on three-dimensional vectors, nor on homogeneous matrices (at least not in a useful way). I did see that there are a few Lua "vector math" libraries, but I decided all existing options either did not include enough of the operations I needed, or ostensibly contained a roughly correct range of features, but were in fact secretly an exercise in overly-fancy Lua metaprogramming more than anything else.

I instead decided to write my own Lua matrix/vector math library--it is not the first time I have done such a thing.

I initially had an "amazing idea" to store the matrix and vector data directly in Love2D ByteData objects, the thinking being that there are (in fact) fewer intermediate transformations that occur when the matrices are passed to the shader via the Love2D Shader:send() API.

However, some time after these commits, I discovered that this ByteData idea is roughly 700x slower than storing matrix/vector data in luajit C structs.

In the same commit series, I also started uploading and drawing textures via the new/undocumented Love2D 12.0 love.graphics.newTexture API.

64d959ed

produces the following image:

Collada scene representation

| commit | love-demo @ 64d959ed..bef01af5 |

Collada is a quite well-designed (and sadly abandoned) file format for generalized description of three-dimensional scenes.

This code is mostly a 1-to-1 translation of the original version I wrote in C++ to Lua, though with a few minor improvements I spontaneously inserted during translation with the power of hindsight.

The general pattern is that a Collada (.dae) file (as exported from a 3D modeling tool) is preprocessed by a python script to a form that is easier to use in a real-time rendering context. Specifically, the scene metadata in scene/test/test.lua was not hand-written, but was instead generated by collada/main.py.

bef01af5

produces the following image:

Vertex pulling

| commit | love-demo @ 12d2f8ff |

While authoring the previous commits I decided that Love2D's Mesh is an awkward and "quirky" API design, and that I should find a way to avoid using it entirely.

My primary complaint is the Mesh's inflexibility in allowing for specification of arbitrary per-draw index buffer offsets and vertex buffer offsets, as in glDrawElementsBaseVertex or IASetIndexBuffer/IASetVertexBuffers.

However, there are no other APIs that Love2D exposes that allow one to define and upload vertex attributes.

Fortunately, it is possible to avoid using vertex attributes entirely via the new APIs introduced in Love2D 12.0:

- love.graphics.newBuffer to create Shader Storage Buffers

- a new overload for Shader:send to bind Shader Storage Buffers

- love.graphics.drawFromShader (the only way to initiate indexed rendering with no Mesh object)

This "vertex pulling" approach allows me to specify whatever offset arithmetic I desire via a combination of arbitrary vertex shader code and arbitrary uniforms.
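To make the offset arithmetic concrete, the following Python sketch emulates on the CPU what a vertex-pulling vertex shader does for each invocation: it looks up an index, adds a base vertex offset, and fetches the vertex from a storage buffer. The function name and the parameters `first_index` and `base_vertex` are hypothetical stand-ins for the uniforms mentioned above; the real implementation is GLSL, not Python.

```python
def pull_vertices(vertex_buffer, index_buffer, first_index, index_count, base_vertex):
    """Emulate per-draw index and vertex offsets, as a vertex-pulling shader would.

    Each loop iteration corresponds to one vertex shader invocation, where
    `i` plays the role of the built-in vertex ID.
    """
    pulled = []
    for i in range(index_count):
        index = index_buffer[first_index + i]            # per-draw index buffer offset
        pulled.append(vertex_buffer[base_vertex + index])  # per-draw vertex buffer offset
    return pulled

# Two meshes packed back to back in the same buffers.
vertices = ["a0", "a1", "a2", "b0", "b1", "b2"]
indices = [0, 1, 2, 0, 2, 1]
second_mesh = pull_vertices(vertices, indices, first_index=3, index_count=3, base_vertex=3)
```

Because the offsets are ordinary shader inputs, any number of meshes can share one pair of buffers, and each draw only changes a few uniforms.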

12d2f8ff is graphically identical to the previous commit, albeit with all former uses of Mesh entirely removed.

Scene-specified node (object) animation

| commit | love-demo @ 12d2f8ff..e5148ac4 |

As a prelude to vertex skinning ("armatures"), I next implemented support for Collada-scene-specified animations. These are internally expressed as a sequence of cubic Bezier curves, a parametric system of equations defined by four "control points".

Because I have chronically not given myself enough time to understand and implement De Casteljau's algorithm (or one of the myriad fancier cubic equation solving algorithms), I instead solve Bezier equations using a naive binary search. This appears to be very much sufficiently performant, even when evaluated by luajit.
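A minimal Python sketch of this binary-search approach follows. It assumes each curve segment is given as four (time, value) control points and that the time component increases monotonically with the curve parameter t, which is what makes bisection valid. The function names and the fixed iteration count are choices made for this example, not details taken from the love-demo source.

```python
def bezier(p0, p1, p2, p3, t):
    """Evaluate a one-dimensional cubic Bezier polynomial at parameter t."""
    u = 1.0 - t
    return u * u * u * p0 + 3.0 * u * u * t * p1 + 3.0 * u * t * t * p2 + t * t * t * p3

def solve_t(x0, x1, x2, x3, x, iterations=32):
    """Bisect for t in [0, 1] such that the Bezier time component equals x.

    Assumes the time component is monotonically increasing in t.
    """
    lo, hi = 0.0, 1.0
    for _ in range(iterations):
        mid = (lo + hi) / 2.0
        if bezier(x0, x1, x2, x3, mid) < x:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0

def sample_curve(points, time):
    """points: four (time, value) control points; returns the value at `time`."""
    times = [p[0] for p in points]
    values = [p[1] for p in points]
    t = solve_t(times[0], times[1], times[2], times[3], time)
    return bezier(values[0], values[1], values[2], values[3], t)
```

Each bisection step halves the search interval, so 32 iterations already resolve t far more finely than single-precision shader math can use.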

e5148ac4 produces the following image, which also demonstrates Bezier-tangent-smoothed transitions between a relatively small number of keyframes (~5).

Skinned vertex animation

| commit | love-demo @ e5148ac4..8d8c87c5 |

This was (intentionally) a relatively simple change to implement on top of the previous animation code. The primary change is the introduction of skinned_vertex.glsl.

This uses a new VertexJWLayout Shader Storage Buffer containing per-vertex joint indices and joint weights, and applies a weighted influence on each vertex from the Joints uniform. Joints is an array of transformation matrices, one transform per joint in the skeleton.

Referencing joint weights and joint indices from a shader storage buffer rather than vertex attributes also continues the pattern of deliberately avoiding the Mesh API.
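The weighted-influence step can be sketched in Python as ordinary linear blend skinning. This is an illustration of the general technique only: the function names and the nested-list matrix layout are assumptions, and the real code is the GLSL in skinned_vertex.glsl.

```python
def mat_vec(m, v):
    """Multiply a 4x4 row-major matrix by a 4-component vector."""
    return [sum(m[i][k] * v[k] for k in range(4)) for i in range(4)]

def skin_vertex(position, joint_indices, joint_weights, joints):
    """Blend the vertex position through each influencing joint transform.

    joints: list of 4x4 matrices, one per joint in the skeleton.
    joint_indices / joint_weights: per-vertex influences; weights should sum to 1.
    """
    v = [position[0], position[1], position[2], 1.0]
    blended = [0.0, 0.0, 0.0, 0.0]
    for index, weight in zip(joint_indices, joint_weights):
        transformed = mat_vec(joints[index], v)
        for i in range(4):
            blended[i] += weight * transformed[i]
    return blended[:3]

IDENTITY = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0], [0.0, 0.0, 0.0, 1.0]]
MOVE_X_2 = [[1.0, 0.0, 0.0, 2.0], [0.0, 1.0, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0], [0.0, 0.0, 0.0, 1.0]]

# A vertex influenced equally by a stationary joint and a joint moved +2 in X
# ends up moved +1 in X.
skinned = skin_vertex((1.0, 1.0, 1.0), [0, 1], [0.5, 0.5], [IDENTITY, MOVE_X_2])
```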

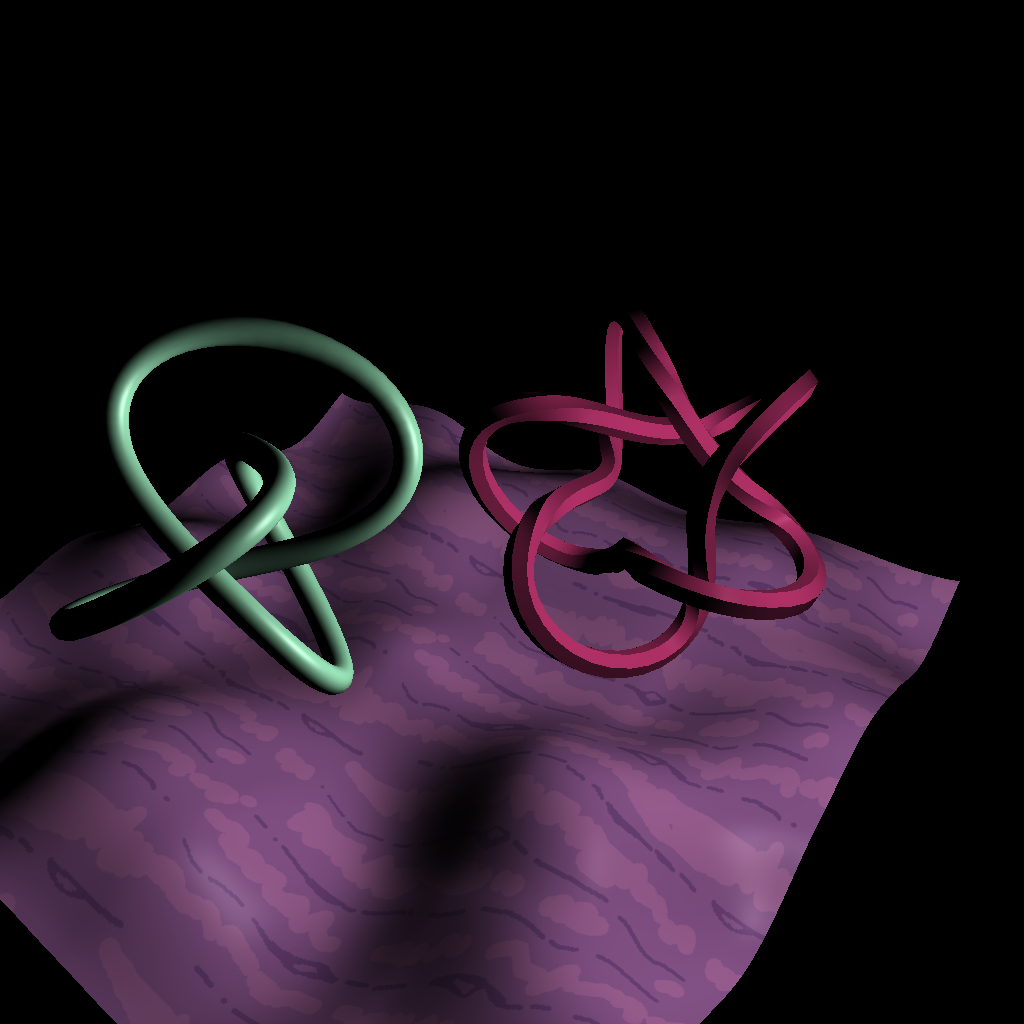

8d8c87c5

produces the following image:

Shadow mapping

| commit | love-demo @ 8d8c87c5..ba777fbf |

I then decided I wanted to experiment with fancier rendering pipelines, using multiple framebuffers and render targets. Both can be configured via love.graphics.setCanvas (and this is one of the few Love2D graphics APIs that this project uses that is not Love2D 12.0-specific).

The shadow effect is achieved by first drawing the scene from the perspective of the light source (collada_scene.lua:267-275), where the output from this render is stored in g_shadow_canvas rather than the window/display framebuffer.

The same scene is then redrawn (collada_scene.lua:277-293), but with vertex positions instead being transformed by the camera's transformation matrix. g_shadow_canvas is sampled in the Shadow function in the pixel shader. PixelLightPosition is the current pixel's coordinates in the light coordinate space (multiplied by the same light transformation matrix used in the previous rendering step in the vertex shader). Pixels that have a light-coordinate-space depth value that is larger than the depth value as sampled from g_shadow_canvas are drawn with reduced intensity.
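The depth comparison at the heart of this technique can be sketched in a few lines of Python. This is a CPU-side illustration only: the function name, the nearest-texel lookup, the `bias` term (a common addition to suppress self-shadowing artifacts), and the `shadowed` intensity value are assumptions for this example and are not taken from the demo's pixel shader.

```python
def shadow_factor(light_space_position, shadow_map, bias=0.005, shadowed=0.5):
    """Return an intensity multiplier for one pixel.

    light_space_position: (x, y, depth), each already mapped to [0, 1].
    shadow_map: 2D list of the nearest depths seen from the light.
    """
    x, y, depth = light_space_position
    height = len(shadow_map)
    width = len(shadow_map[0])
    # Nearest-texel lookup, clamped to the edges of the map.
    px = min(int(x * width), width - 1)
    py = min(int(y * height), height - 1)
    nearest = shadow_map[py][px]
    # Something closer to the light was drawn at this texel: pixel is in shadow.
    return shadowed if depth - bias > nearest else 1.0

# One-row shadow map: an occluder at depth 0.3 on the left, nothing on the right.
example_map = [[0.3, 1.0]]
in_shadow = shadow_factor((0.25, 0.5, 0.6), example_map)
lit = shadow_factor((0.75, 0.5, 0.6), example_map)
```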

Note that the animation of both the light source and the camera are interpreted directly from animation data as defined in the Collada scene description as exported from a 3D modeling tool.

ba777fbf

produces the following image:

Screen Space Ambient Occlusion

| commit | love-demo @ ba777fbf..761a0073 |

This range of commits includes the first that used multiple render targets. love.graphics.clear has a peculiar and undocumented/underspecified behavior in that it does not clear all attached render targets.

After experimenting with love.graphics.clear's multiple render target variant, I decided to deliberately avoid using love.graphics.clear and instead implement my own clear function via pixel_clear.glsl. As I documented in a previous blog, there is a negligible or zero performance difference between my pixel_clear implementation and glClear as implemented in Mesa, as both result in either nearly or exactly the same commands being sent to the graphics unit, depending on the type of clear.

Avoiding double-clearing is also one of the primary reasons I replaced love.run in a later commit.

The rendering pipeline in 761a0073 is:

- render to the color, position, and normal "geometry" buffers

- evaluate an SSAO kernel (sampling from the color/position/normal buffers), rendering the result to an "occlusion" buffer

- blur the occlusion buffer (removes noise introduced by the noise texture from the previous step)

- blend the occlusion buffer with the color buffer, rendering to the display buffer

In pixel_ssao.glsl, I evaluate kernel_depth twice per pixel. The goal of this is to simultaneously produce highlight and shadow effects, each with independently-tuneable parameters.

761a0073 also allows SSAO constants to be interactively tuned via the q/w/a/s/z/x and arrow keyboard keys.

A variant of 761a0073 produced the following image:

Voxel world rendering

| commit | love-demo @ 761a0073..3b4604c6 |

Though it was previously intended that I act as the team's primary 3D environment artist (and I originally had plans that involved environments exported via Collada scenes), it was around this time that I suddenly realized that there was absolutely no way that I could satisfactorily fulfill my "3D environment artist" role and the "3D engine developer" role simultaneously within the tiny game jam period.

In part for this reason, and mostly because Shiroiii is a better environment designer than me in any case, it was decided that we'd pivot the entire project to use an environment drawn in and exported from Minecraft Beta 1.7.3 (Shiroiii's preferred 3D environment drawing tool).

Most of the work done for parsing and transforming Minecraft MCRegion data is done as a pre-processing step, in mc.py. mc.py has several responsibilities:

- I did not plan to support voxel editing whatsoever, so mc.py completely ignores/removes all blocks that are "permanently occluded" due to having 6 non-air-block neighbors.

- The blocks that were not culled are then converted to naive index and vertex buffers, which are then trivially rendered.
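The culling rule in the first item can be sketched in Python as follows. This is a simplified illustration, not the actual mc.py code: it assumes the world is already available as a set of non-air block coordinates, and it treats everything outside that set (including space beyond the world boundary) as air.

```python
# Offsets to the six face-adjacent neighbors of a block.
NEIGHBORS = [(-1, 0, 0), (1, 0, 0), (0, -1, 0), (0, 1, 0), (0, 0, -1), (0, 0, 1)]

def cull_occluded(blocks):
    """Drop blocks whose six neighbors are all non-air.

    blocks: set of (x, y, z) coordinates of non-air blocks.
    Returns the set of blocks that have at least one air neighbor.
    """
    return {
        (x, y, z)
        for (x, y, z) in blocks
        if any((x + dx, y + dy, z + dz) not in blocks for dx, dy, dz in NEIGHBORS)
    }

# A solid 3x3x3 cube: only the single center block is permanently occluded.
solid = {(x, y, z) for x in range(3) for y in range(3) for z in range(3)}
visible = cull_occluded(solid)
```

For terrain that is mostly solid below the surface, this removes the large majority of blocks before any vertex data is generated.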

When the camera is submerged below the "surface" of the world, the effect of the culling step becomes obvious: most of the "permanently occluded" blocks that would otherwise exist between bedrock and the surface no longer exist, due to being culled by mc.py.

Despite this, this approach is still not fast enough, and the demo footage shows this--my humble development laptop indeed draws this commit at about 10-20fps for a world that was originally 1024x128x1024 voxels.

3b4604c6

produces the following image:

Faster voxel world rendering: geometry shaders

| commit | love-demo2 @ d8443b3c..86890e2a |

The most obvious next step after 3b4604c6 is, in addition to culling entire voxels, to also cull the individual cube faces of each voxel based on whether that voxel face has an adjacent non-air block.

There are several ways to implement this from a data modeling and rendering perspective, but the scheme that felt most interesting to me was to generate each of the possible 63 cube face occlusion cases in a geometry shader.

Love2D (including 12.0) does not provide a geometry shader API whatsoever.

Despite this missing feature, I refused to become discouraged. I thought this geometry shader idea was too cool to ignore, so I pitched to my team: "What if we completely subvert the entire Love2D rendering API, and call OpenGL directly from C code loaded via the luajit ffi?".

To my complete surprise, the team agreed to my proposal.

Recognizing this was a radical change in approach from the previous code, I decided to start a completely new repository, which I imaginatively named love-demo2. In the first commit, I wrote a "reference implementation" for voxel world rendering that does not use a geometry shader, so that I had a baseline to use to compare performance.

In the following commit, the geometry shader was as cool as I hoped it would be. The most important part of the code is this:

    int conf = gs_in[0].Configuration;
    if ((conf &  1) != 0) emit_face(vec3(-1.0,  0.0,  0.0), face_1);
    if ((conf &  2) != 0) emit_face(vec3( 0.0, -1.0,  0.0), face_2);
    if ((conf &  4) != 0) emit_face(vec3( 0.0,  0.0, -1.0), face_4);
    if ((conf &  8) != 0) emit_face(vec3( 0.0,  0.0,  1.0), face_8);
    if ((conf & 16) != 0) emit_face(vec3( 0.0,  1.0,  0.0), face_16);
    if ((conf & 32) != 0) emit_face(vec3( 1.0,  0.0,  0.0), face_32);

Configuration is a 6-bit integer bit-field (one bit per cube face), where a bit being set represents "this face is not occluded, and should be drawn". The emit_face function then emits triangles for the face represented by each cube configuration bit.

The configuration bitfields themselves were generated by a fairly-heavily-rewritten mc.py.
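As a hedged sketch of how such bitfields could be computed during preprocessing, the Python below derives a 6-bit configuration for one voxel from its neighbors. The bit-to-direction mapping follows the shader excerpt above (bit 1 is -X, 2 is -Y, 4 is -Z, 8 is +Z, 16 is +Y, 32 is +X); the function name and the set-of-coordinates world representation are assumptions, not the actual mc.py code.

```python
# (bit, neighbor offset) pairs, matching the directions used in the shader excerpt.
FACE_BITS = [
    (1, (-1, 0, 0)),
    (2, (0, -1, 0)),
    (4, (0, 0, -1)),
    (8, (0, 0, 1)),
    (16, (0, 1, 0)),
    (32, (1, 0, 0)),
]

def configuration(blocks, position):
    """Return the 6-bit field of faces that are not occluded for one voxel.

    blocks: set of (x, y, z) coordinates of non-air blocks.
    A bit is set when the neighbor in that direction is air.
    """
    x, y, z = position
    conf = 0
    for bit, (dx, dy, dz) in FACE_BITS:
        if (x + dx, y + dy, z + dz) not in blocks:
            conf |= bit
    return conf

# Two blocks side by side along X: each hides exactly one face of the other.
pair = {(0, 0, 0), (1, 0, 0)}
left = configuration(pair, (0, 0, 0))   # +X face hidden
right = configuration(pair, (1, 0, 0))  # -X face hidden
```

A configuration of 0 means every face is occluded, which is the "permanently occluded" case that is culled outright, leaving 63 drawable configurations.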

Everything about this seemed great. The only issue was that I also bothered to measure performance, and I determined on all of my hardware configurations that sending cube face occlusion data as a pre-calculated list of triangles is about 30% faster than sending cube face occlusion data as a list of Configuration bitfields with geometry-shader-generated triangles.

It appears the primary source of anti-fun is in the OpenGL specification itself, where geometry shaders are not allowed to reorder primitives. Because the output of each geometry shader is variable length and not known at the beginning of the instance draw, the implementation must buffer the result of all instanced draws before sending the result to the next stage in the rendering pipeline (a pipeline stall).

With a few hundred thousand cube instances, the impact of this stall is significant enough to have a noticeable (30%) impact on performance. Faced with the harsh reality of performance-testing data, I unceremoniously discarded the geometry shader approach starting in the next commit.

86890e2a is graphically identical to the three previous commits, the only difference between each being changes in relative performance/framerate.

Smaller voxel world rendering: grouping cubes by configuration

| commit | love-demo2 @ 094847ca |

The "performance baseline" commit d8443b3c is quite fast, and runs at 60fps on my humble development laptop. The issue with this commit is that it also required a 760MB blob of vertex attributes (not committed in the git repository) to represent all of the triangles in each world, which even after compression (and two worlds) would have meant that the Bibliotheca technical demo would have been a ~500MB download.

Feeling this was completely unacceptable, I designed a new approach: group each cube by "configuration", and "batch" instanced cube rendering by configuration, where each instanced draw call starts drawing triangles from differing offsets in the index buffer.
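The grouping step might look something like the following Python sketch. It sorts cube instances into contiguous runs by configuration and emits one draw record per run. The record layout, and the assumption that each visible face costs 6 indices (two triangles), are illustrative choices for this example; they are not taken from the love-demo2 source.

```python
from collections import defaultdict

def batch_by_configuration(cubes):
    """Group cube instances by configuration.

    cubes: iterable of ((x, y, z), configuration) pairs.
    Returns (instances, draws), where `instances` is the reordered position
    list and each draw is (configuration, index_count, first_instance,
    instance_count).
    """
    groups = defaultdict(list)
    for position, conf in cubes:
        groups[conf].append(position)

    instances = []
    draws = []
    for conf in sorted(groups):
        # Assumption: 6 indices (two triangles) per visible face.
        index_count = bin(conf).count("1") * 6
        draws.append((conf, index_count, len(instances), len(groups[conf])))
        instances.extend(groups[conf])
    return instances, draws

example_cubes = [((0, 0, 0), 63), ((1, 0, 0), 3), ((2, 0, 0), 63)]
instances, draws = batch_by_configuration(example_cubes)
```

With at most 63 drawable configurations, a whole world needs at most 63 instanced draw calls, and the per-instance data shrinks to little more than a position.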

This "instanced rendering, where each render uses both a unique index buffer offset and unique index count" scheme is awkward if not completely impossible to achieve with the Love2D Mesh API (one Mesh object per permutation? meh.).

Compared to d8443b3c's "~760MB per world", 094847ca only requires ~16MB to represent each world, and has similar/identical performance characteristics.

094847ca is graphically identical to the three previous commits.

Non-cube meshes

| commit | love-demo2 @ 094847ca..50188813 |

Most of this series of commits is about data modeling for "block IDs" that use non-cube meshes (and actually 3D modeling the non-cube meshes, even if trivial).

This also introduces deferred shading, which is used for real-time point lighting for each of the torches in the scene (this is not at all the same lighting technique that Minecraft uses).

50188813

produces the following image:

Integer line-traced mouse picking

| commit | love-demo2 @ 91d86a4f |

My primary goal for this was to implement "traditional" mouse → voxel picking. However, rather than implement "real" raycasting, I thought it would be an interesting experiment to instead sample the world via "integer line tracing" using Bresenham's line algorithm.
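For reference, a three-dimensional variant of Bresenham's line algorithm can be sketched in Python as below. This is a generic textbook-style formulation that steps along the dominant axis and accumulates integer error terms for the other two; it is not the love-demo2 implementation, and the function name is made up for this example.

```python
def line_3d(start, end):
    """Return the integer voxel coordinates on a line from start to end.

    Steps once per unit along the axis with the largest extent, using
    integer error accumulators to decide when the other two axes advance.
    """
    delta = [abs(e - s) for s, e in zip(start, end)]
    step = [1 if e > s else -1 for s, e in zip(start, end)]
    major = max(range(3), key=lambda axis: delta[axis])
    minor = [axis for axis in range(3) if axis != major]
    error = [2 * delta[axis] - delta[major] for axis in minor]

    point = list(start)
    points = [tuple(point)]
    for _ in range(delta[major]):
        point[major] += step[major]
        for i, axis in enumerate(minor):
            if error[i] >= 0:
                point[axis] += step[axis]
                error[i] -= 2 * delta[major]
            error[i] += 2 * delta[axis]
        points.append(tuple(point))
    return points

diagonal = line_3d((0, 0, 0), (2, 2, 2))
```

Because the line only ever visits one voxel per step along the dominant axis, it can skip voxels that a true ray would clip through at a corner, which is one plausible reason for reduced picking accuracy.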

Its application as a replacement for raycast mouse-picking turned out to be less accurate than I hoped, but I do think the "3d line drawing" I implemented for debugging looks interesting.

The following commits do not use any of this "integer line tracing" code, and instead implement mouse picking by sampling the same geometry buffer that is already being generated for deferred shading--a pixel-exact and relatively inexpensive mouse picking implementation.

91d86a4f produces the following image (use the left and right gamepad bumpers to draw lines):

Voxel collision

| commit | love-demo2 @ f6bd9182..e08c74e0 |

Originally intended to be part of the mouse picking implementation, 48a85671 also introduced "perfect hashing" for voxel worlds. Specifically, the position (x/y/z) coordinates of all non-culled voxels are packed as 32-bit integers, then fed to a perfect hash function generator. The intent is to allow for O(1) "does block x/y/z exist" checks without storing the voxels in memory as a 3D array, nor resorting to a less efficient generalized hash table.
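The packing step could be sketched in Python like this. The bit layout is an assumption chosen to fit the 1024x128x1024 world size mentioned earlier (10 bits for x, 7 for y, 10 for z, 27 bits total); the actual layout used by the project is not stated, and the perfect hash function itself (generated offline by a tool) is not reproduced here.

```python
# Assumed bit widths, sized for a 1024 x 128 x 1024 world.
X_BITS, Y_BITS, Z_BITS = 10, 7, 10

def pack(x, y, z):
    """Pack voxel coordinates into a single integer key that fits in 32 bits."""
    if not (0 <= x < (1 << X_BITS) and 0 <= y < (1 << Y_BITS) and 0 <= z < (1 << Z_BITS)):
        raise ValueError("coordinate out of range")
    return (x << (Y_BITS + Z_BITS)) | (y << Z_BITS) | z

def unpack(key):
    """Inverse of pack(): recover (x, y, z) from a packed key."""
    z = key & ((1 << Z_BITS) - 1)
    y = (key >> Z_BITS) & ((1 << Y_BITS) - 1)
    x = key >> (Y_BITS + Z_BITS)
    return x, y, z

largest_key = pack(1023, 127, 1023)
```

Each distinct voxel maps to a distinct key, so the set of keys can be handed to a perfect hash function generator; at runtime, an existence check then hashes the packed query coordinate and compares it against the key stored in that slot.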

Because I have several hundred thousand distinct voxel coordinates/keys that I want to hash, finding a suitable perfect hash function generator that wasn't either ridiculously bloated or too naive to deal with hundreds of thousands of keys was somewhat of a challenge.

Eventually I found nbperf, which is derived from NetBSD code (already a good sign), but modified to support integer keys. This was precisely what I was looking for.

The collision implementation itself is a combination of coarse bounding boxes and sphere → axis aligned bounding box collision testing, with sliding sphere collision response.
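The sphere → axis-aligned bounding box test mentioned above is commonly implemented by clamping the sphere center to the box and comparing the distance to the radius. The Python below is a sketch of that standard test under that assumption; it omits the coarse bounding boxes and the sliding response, and the function name is hypothetical.

```python
def sphere_intersects_aabb(center, radius, box_min, box_max):
    """Return True when a sphere overlaps an axis-aligned box.

    The closest point on the box to the sphere center is found by clamping
    each coordinate to the box extents.
    """
    closest = [min(max(c, lo), hi) for c, lo, hi in zip(center, box_min, box_max)]
    distance_squared = sum((c - p) ** 2 for c, p in zip(center, closest))
    return distance_squared <= radius * radius

# A unit voxel at the origin, tested against a sphere of radius 0.5.
voxel_min, voxel_max = (0.0, 0.0, 0.0), (1.0, 1.0, 1.0)
touching = sphere_intersects_aabb((1.4, 0.5, 0.5), 0.5, voxel_min, voxel_max)
apart = sphere_intersects_aabb((2.0, 0.5, 0.5), 0.5, voxel_min, voxel_max)
```

The vector from the closest point to the sphere center also gives a natural push-out direction, which is one common basis for a sliding response.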

e08c74e0 collision features are identical to those demonstrated in the itch.io release.

Bonus video: animated book

| commit | love-demo2 @ 2b379aa2 |

| Updated | 10 days ago |

| Status | In development |

| Platforms | Windows, Linux |

| Authors | FNicon, Pixel _Jamp, RSG0, purist, Shiroiii/Morning Dove |

| Made with | LÖVE |

| Tags | 3D, No AI |

| Average session | A few seconds |

| Languages | Japanese |

| Content | No generative AI was used |
